
Unexpected memory usage #2783

Comments


See #1997 and related pull requests. Thanks for reporting; I hope someone can solve it.


Hi, maybe the bug has something in common with the problem described in this comment?


@loic-couharde, thanks for a very helpful comment here. It seems the issue was with follow-redirects; if we bump it to the latest version, I think we should be OK. Follow progress over at #2689.


@maurocchi @jasonsaayman I can confirm this with 99% certainty. Yesterday we upgraded a daemon worker that uses axios heavily, and since then we have had a memory leak with the classic sawtooth pattern.


@tnolet I have just run the above test again using axios 0.20.0-0.


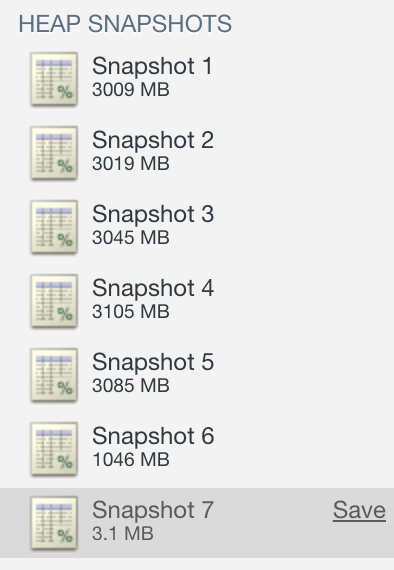

@maurocchi I'm not sure the code you provided demonstrates a memory leak. You set follow-redirects' max buffer to ~9000 terabytes and then make a single request that writes random data at a high rate for 60 s. follow-redirects buffers the request data in memory in case it needs to be replayed on the next 3xx response, so growing memory usage for a never-ending request is what I would expect. Comparing to native

The question to ask is: over time, after multiple large requests, are objects left in memory that cannot be garbage-collected? If not, are we seeing undesirable GC behavior, and is there a way to prevent it? Based on @tnolet's graph, I think it's more likely undesirable GC behavior than a leak, meaning the GC eventually kicks into gear and frees the memory.

Here's a test I made that posts about 20 GB over roughly 20 requests. Memory gets high because each request allocates about 1 GB, but it eventually settles at about 3 MB.

```js
const axios = require("axios").default;
const { randomBytes } = require("crypto");
const followRedirects = require("follow-redirects");

// Let follow-redirects buffer request bodies of (practically) any size.
followRedirects.maxBodyLength = Number.MAX_SAFE_INTEGER;

const sendAbout = 1024 * 1024 * 1000 * 20; // ~20GB in total
let totalSent = 0;

(async function () {
  while (totalSent < sendAbout) {
    try {
      const opts = {
        method: "post",
        url: "http://localhost:8585/test-data",
        headers: {
          "content-type": "application/octet-stream",
        },
        // 900 to 1000 MB of random data per request (whole number of bytes)
        data: randomBytes(Math.floor(1024 * 1024 * (Math.random() * 100 + 900))),
      };

      console.log(`sending: ${opts.data.byteLength} bytes`);
      await axios(opts);
      totalSent += opts.data.byteLength;
      console.log(`progress: ${((totalSent / sendAbout) * 100).toFixed(2)}%`);
    } catch (err) {
      console.log(err.message);
    }
  }

  console.log("done");

  // keep the process alive after the loop so memory can be watched as the GC runs
  setInterval(function () {
    console.log(new Date());
  }, 10000);
})();
```

Test server:

```js
const express = require("express");
const rawParser = require("body-parser").raw;

const app = express();

// dead end for data: parse the raw body (up to 2GB) and discard it
app.post("/test-data", rawParser({ limit: "2GB" }), (req, res) => {
  console.log(`parsed: ${req.body.length} bytes`);
  res.send();
});

app.listen(8585, () => {
  console.log("server listening");
});
```

There may be a trick to get the GC to clean up sooner, but the GC tries to balance large memory allocations, run time, processor usage, etc.
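One way to probe the question above (memory that is merely not yet collected versus memory that can never be collected) without a server is a minimal sketch like the following. It is an assumption-laden stand-in: plain `Buffer`s take the place of axios request bodies, `measureExternalMemory` is a hypothetical helper, and forcing a collection relies on `global.gc`, which Node only defines when started with `--expose-gc`.

```javascript
const { randomBytes } = require("crypto");

// Hypothetical helper: forces a GC when Node was started with --expose-gc,
// otherwise does nothing and lets V8 collect on its own schedule.
const maybeGc = typeof global.gc === "function" ? global.gc : () => {};

// Buffer contents live outside the V8 heap, so track `external` memory in MB.
function externalMb() {
  return process.memoryUsage().external / (1024 * 1024);
}

// Allocate `rounds` buffers of `sizeMb` each, keeping no reference to any of them,
// and report external memory at the start, at the peak, and after a (forced) GC.
function measureExternalMemory(rounds, sizeMb) {
  maybeGc();
  const baseline = externalMb();
  let peak = baseline;
  let allocatedMb = 0;
  for (let i = 0; i < rounds; i++) {
    const body = randomBytes(sizeMb * 1024 * 1024); // stands in for a request body
    allocatedMb += body.length / (1024 * 1024);
    peak = Math.max(peak, externalMb());
    // `body` goes out of scope at the end of each iteration.
  }
  maybeGc();
  return { allocatedMb, baseline, peak, afterGc: externalMb() };
}

module.exports = { measureExternalMemory };
```

Run it with `node --expose-gc` and call e.g. `measureExternalMemory(5, 100)`: if `afterGc` drops back near `baseline`, the memory was only waiting for the GC; a gap that keeps growing across repeated calls would point at a real leak.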


So what I assumed to be a memory leak was the expected behavior of follow-redirects' buffering mechanism! In fact, the problem disappears when redirect following is disabled. Thanks @westhom!
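A minimal sketch of disabling redirect following for the upload test, assuming the axios versions discussed in this thread: with `maxRedirects: 0`, axios's Node HTTP adapter uses the native `http`/`https` transport instead of follow-redirects, so the request body is streamed rather than buffered for a possible replay. `uploadOptions` is a hypothetical name, and the URL is the test server from the earlier comment.

```javascript
// Hypothetical request options for the upload test; pass them to axios together
// with a `data` payload, e.g. axios({ ...uploadOptions, data: body }).
const uploadOptions = {
  method: "post",
  url: "http://localhost:8585/test-data",
  headers: { "content-type": "application/octet-stream" },
  // 0 = do not follow redirects: axios then skips follow-redirects, which would
  // otherwise keep a copy of the whole body in memory in case a 3xx needs a resend.
  maxRedirects: 0,
};

module.exports = { uploadOptions };
```

The trade-off is that 3xx responses are no longer followed; with the default `validateStatus` (2xx only), such a response rejects the request instead.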


@jasonsaayman, ignore my contribution. It was the `debug` library, of all things!

When uploading a file, the complete contents of the file are loaded into memory in case the request ends up with a redirect response and has to be resent. See axios/axios#2783.
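The buffering described above can be pictured with a toy model. This is not follow-redirects' actual code: `ReplayableBody` is a hypothetical class that, like the mechanism described, keeps every written chunk (up to a `maxBodyLength` cap) so the whole body can be resent after a redirect. The memory it holds therefore grows with the size of the upload, not with the size of each chunk.

```javascript
// Toy model only (not follow-redirects' source): a request body that remembers
// everything written to it so it can be replayed to a redirect target.
class ReplayableBody {
  constructor(maxBodyLength) {
    this.maxBodyLength = maxBodyLength; // cap on how many bytes may be buffered
    this.chunks = [];
    this.bufferedLength = 0;
  }

  // Every chunk is kept in memory until the request is over, so memory use
  // grows with the total upload size (e.g. a whole video file).
  write(chunk) {
    if (this.bufferedLength + chunk.length > this.maxBodyLength) {
      throw new Error("request body exceeds maxBodyLength");
    }
    this.chunks.push(chunk);
    this.bufferedLength += chunk.length;
  }

  // After a 3xx response, the full body is needed again to resend the request.
  replay() {
    return Buffer.concat(this.chunks, this.bufferedLength);
  }
}

module.exports = { ReplayableBody };
```

Raising the cap (as the test above does with `Number.MAX_SAFE_INTEGER`) only removes the safety limit; it does not change the fact that the whole body stays in memory.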


This solution works for me and fixes the high memory usage. Before the fix, memory usage was around 300 MB after sending a video of about 220 MB. Thanks @westhom @enerdgumen!

Describe the bug

I observe a potential memory leak while posting data from a stream using Axios.

To Reproduce

With a fake server listening (`nc -kl localhost 5000`), let's profile this simple script, `test_axios.js`, by running `mprof run -C -T1 timeout 60 node test_axios.js < /dev/urandom`. This produces the following chart:

Expected behavior

Then consider the following equivalent axios-agnostic script:

It produces:

Here the memory is properly garbage-collected.
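The reporter's axios-agnostic script is not preserved here. As a hypothetical stand-in, the sketch below pipes a readable stream straight into Node's native `http.request` using `stream.pipeline`; nothing holds on to chunks that have already been sent, which is consistent with the flat memory described. `streamUpload` and its options are assumptions, not the original code.

```javascript
const http = require("http");
const { pipeline } = require("stream");

// Hypothetical helper: POST everything read from `source` to host:port/path,
// streaming chunk by chunk. `callback(err, statusCode)` is called exactly once.
function streamUpload(source, { host, port, path }, callback) {
  let done = false;
  const finish = (err, statusCode) => {
    if (!done) {
      done = true;
      callback(err, statusCode);
    }
  };

  const req = http.request(
    {
      host,
      port,
      path,
      method: "POST",
      headers: { "content-type": "application/octet-stream" },
    },
    (res) => {
      res.resume(); // drain the response body so the socket can be released
      res.on("end", () => finish(null, res.statusCode));
    }
  );
  req.on("error", finish); // network errors, even after the body was sent

  // pipeline() applies backpressure: a chunk is only read from `source` when the
  // request can take it, and written chunks are not retained afterwards.
  pipeline(source, req, (err) => {
    if (err) finish(err);
  });
}

module.exports = { streamUpload };
```

For the setup in the report, that would be roughly `streamUpload(process.stdin, { host: "localhost", port: 5000, path: "/" }, console.log)`, fed from `/dev/urandom`.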

Environment:
